The Conference Demo vs. the Control Tower: A Growing Disconnect

Walk the exhibition floor at any major supply chain conference in early 2026 and the message is unmistakable: AI agents are ready to run your supply chain. Demos show autonomous inventory rebalancing, real-time production rescheduling, and exception resolution happening without a single human keystroke. The technology looks polished, the dashboards gleam, and vendor roadmaps promise full autonomy within 18 months. Yet behind closed doors, a very different conversation is taking place among the chief supply chain officers, VP-level planners, and operations directors who actually signed the checks for these pilot programs.

A recent analysis published in Supply Chain Management Review (SCMR) by Arturo Torres Arpi Acero, founder and CEO of Ventagium, pulls back the curtain on what has become an industry-wide reckoning. The article references an MIT study, covered by Fortune, which found that 95% of generative AI pilots have failed to deliver measurable profit-and-loss impact. That statistic does not mean AI agents are fundamentally broken. What it reveals is something more nuanced and, arguably, more important: the gap between algorithmic capability and operational decision design has become the defining bottleneck of supply chain automation.

This is not a story about technology immaturity. Model accuracy continues to improve, large language models are reasoning at increasingly sophisticated levels, and computational costs keep falling. The failure lies not in what AI agents can do, but in how organizations have defined what they should do—which decisions they own, under what conditions, with what tolerance for uncertainty, and with what governance when things go wrong. Recognizing this distinction is the first step toward a fundamentally different approach to supply chain AI investment.

Three Fatal Assumptions That Doomed Early Pilots

The SCMR analysis identifies three assumptions that recurred across failed pilot programs, cutting across industries from consumer goods to industrial manufacturing. The first assumption was that full autonomy is the natural destination. Many pilots were justified with executive presentations showing a linear progression from “AI-assisted” to “AI-autonomous” supply chain planning. In reality, supply chain decisions involve continuous tradeoffs between cost, service level, and risk that shift daily as constraints evolve. Should the system increase safety stock to buffer against a potential demand spike, accepting higher carrying costs? Or should it hold inventory lean, risking stockouts but preserving cash flow? These decisions involve commercial judgment, customer relationship context, and contractual nuances that no optimization algorithm can fully internalize. The pilots that actually delivered value focused on much narrower decision points—prioritizing which purchase orders to expedite during capacity crunches, or flagging inventory imbalances across distribution centers early enough for human intervention.

The second assumption was that AI agents would behave like ERP systems. Enterprise Resource Planning transactions are deterministic: identical inputs always produce identical outputs. A purchase order created with the same parameters yields the same result every single time. Many supply chain leaders unconsciously applied this mental model to AI agent outputs. But agentic systems reason probabilistically—two similar demand signals can produce different replanning recommendations depending on confidence scores, data freshness, and constraint weighting. When planners discovered that “the AI gave different answers to the same question,” trust eroded rapidly. Without explicit governance frameworks for handling probabilistic outputs, agent recommendations remained stuck in the category of “interesting but unreliable.”
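One way to make probabilistic outputs governable is to record, with each recommendation, exactly which inputs produced it, so that a planner who sees two different answers can check whether the question really was the same. The sketch below is illustrative only: the field names (`demand_signal`, `data_age_hours`, `confidence`) and both helper functions are hypothetical and are not taken from the SCMR article or from any vendor product.

```python
import hashlib
import json


def input_fingerprint(inputs: dict) -> str:
    """Short, stable hash of the inputs behind a recommendation."""
    # sort_keys makes the hash independent of dictionary key order.
    canonical = json.dumps(inputs, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def differing_inputs(first: dict, second: dict) -> list:
    """Names of the input fields on which two input snapshots differ."""
    keys = sorted(set(first) | set(second))
    return [key for key in keys if first.get(key) != second.get(key)]


# Two runs that a planner might describe as "the same question".
# All values are made up for illustration.
monday = {"sku": "A-100", "demand_signal": 1200, "data_age_hours": 1, "confidence": 0.91}
tuesday = {"sku": "A-100", "demand_signal": 1200, "data_age_hours": 30, "confidence": 0.62}

same_question = input_fingerprint(monday) == input_fingerprint(tuesday)
changed = differing_inputs(monday, tuesday)
print(same_question, changed)
```

Here the demand signal is identical, but data freshness and confidence are not, which gives the planner a concrete explanation for the differing recommendation rather than a reason to distrust the agent.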

The third assumption was that a single agent could manage multi-objective decisions. Some organizations attempted to deploy one “super-agent” handling demand forecasting, inventory allocation, supplier negotiation, production scheduling, and exception management simultaneously. But these decisions operate on entirely different time horizons (minutes to quarters), draw from different data sources, and carry different financial and customer risk profiles. Without clear boundaries, the agent became what the SCMR article calls an “ambiguity engine”—performing beautifully in controlled demonstrations but plateauing immediately when exposed to real execution pressure.

Constrained Autonomy: The New Operating Paradigm

The industry response to widespread pilot failure is not abandonment but recalibration. Drawing on the framework proposed by Pascal Bornet and Jochen Wirtz in their book Agentic Artificial Intelligence, supply chain leaders are adopting a maturity model for agent capability. Most systems today operate at intermediate levels of autonomy, with higher-level autonomy achievable only in narrow, well-controlled domains. The analogy to autonomous driving is instructive: driver-assistance systems handle highway driving far more reliably than complex urban intersections, which still require human judgment. Supply chains are no different: many organizations attempted to operate across all conditions before proving reliability in specific ones.

The concept now gaining traction is “constrained autonomy”—agents operate independently within explicitly defined operating conditions, data quality thresholds, and failure modes. When conditions exceed those boundaries, the system immediately escalates to human decision-makers. This is not a retreat from ambition; it is an engineering discipline. In aerospace, autopilot systems have existed for decades, yet every commercial aircraft carries two pilots. The reason is not that autopilot is inadequate—it is that system safety demands clearly defined human-machine collaboration boundaries. Supply chain AI is undergoing the same maturation.
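The idea of constrained autonomy can be sketched as a simple routing rule: execute inside an explicit operating envelope, escalate with reasons outside it. This is a minimal illustration under stated assumptions; the class names, the three bounds, and the threshold values are all hypothetical, and a real envelope would be defined per decision type by the business.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class OperatingEnvelope:
    """Hypothetical bounds inside which the agent may act alone."""
    max_order_value: float      # largest order value the agent may commit
    min_data_quality: float     # lowest acceptable data quality score (0 to 1)
    max_data_age_hours: float   # oldest input data the agent may rely on


@dataclass(frozen=True)
class Recommendation:
    action: str
    order_value: float
    data_quality: float
    data_age_hours: float


def route(rec: Recommendation, env: OperatingEnvelope) -> Tuple[str, List[str]]:
    """Return ('execute', []) inside the envelope, else ('escalate', reasons)."""
    reasons = []
    if rec.order_value > env.max_order_value:
        reasons.append("order value above limit")
    if rec.data_quality < env.min_data_quality:
        reasons.append("data quality below threshold")
    if rec.data_age_hours > env.max_data_age_hours:
        reasons.append("input data too old")
    return ("escalate", reasons) if reasons else ("execute", reasons)


# Illustrative values only.
envelope = OperatingEnvelope(max_order_value=50_000, min_data_quality=0.95, max_data_age_hours=4)
routine = Recommendation("expedite PO", order_value=12_000, data_quality=0.98, data_age_hours=1.5)
stale = Recommendation("expedite PO", order_value=12_000, data_quality=0.98, data_age_hours=6.0)

print(route(routine, envelope))
print(route(stale, envelope))
```

Returning the reasons alongside the decision is a deliberate choice: the human who receives an escalation sees which boundary was crossed, not just that the agent declined to act.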

This paradigm shift has profound implications for budget allocation. Previously, organizations concentrated AI investment on model development and algorithm optimization. Now, an increasing share of funding is flowing toward data governance, decision-rights design, and trust frameworks. Gartner research suggests that by 2027, over 60% of supply chain AI project budgets will be allocated to data infrastructure and governance rather than model development itself. The industry is transitioning from technology-driven to decision-design-driven investment logic.

Multi-Agent Architecture: From One Brain to an Expert Team

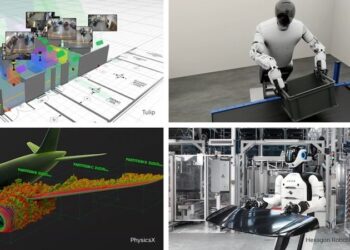

At the technical architecture level, a significant shift is underway: multi-agent architectures are replacing monolithic, do-everything agent designs. Organizations are deploying specialized agents for distinct decision tasks—one agent optimizing purchase order creation timing, another handling exception triage and escalation, a third managing inventory rebalancing across distribution centers. An orchestration layer coordinates interactions between these agents, but each agent’s autonomy remains strictly bounded by its decision type and risk exposure.

The advantages of this architecture operate on multiple dimensions. First, specialized agents are far easier to validate and audit. When an agent’s sole responsibility is “prioritize purchase orders during capacity constraints,” its output quality can be measured against clear, quantifiable benchmarks. Second, individual agent failure does not cascade into system-wide collapse, dramatically improving resilience. Third, organizations can deploy agents incrementally, starting with the highest-confidence decision points and expanding progressively into more complex domains. This “crawl-walk-run” strategy contrasts sharply with the “big bang” deployments that characterized the first wave of supply chain AI.
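A toy version of this pattern, with an orchestration layer that bounds each specialized agent by decision type and risk and isolates individual failures, might look like the following. Everything here is an assumption made for illustration: the `Orchestrator` class, the numeric risk scale, and the two stand-in agents are hypothetical and do not describe any particular product.

```python
from typing import Callable, Dict, Tuple

# A specialized agent is modeled as a function from a task payload to a recommendation.
Agent = Callable[[dict], str]


class Orchestrator:
    """Routes each task to the one agent that owns its decision type."""

    def __init__(self) -> None:
        self._agents: Dict[str, Tuple[Agent, float]] = {}

    def register(self, decision_type: str, agent: Agent, max_risk: float) -> None:
        self._agents[decision_type] = (agent, max_risk)

    def dispatch(self, decision_type: str, task: dict, risk: float) -> Tuple[str, str]:
        if decision_type not in self._agents:
            return ("escalate", f"no agent owns '{decision_type}'")
        agent, max_risk = self._agents[decision_type]
        if risk > max_risk:
            return ("escalate", f"risk {risk} exceeds bound {max_risk}")
        try:
            return ("agent", agent(task))
        except Exception as exc:
            # One agent failing is contained here and handed to a human.
            return ("escalate", f"agent failed: {exc}")


def po_priority_agent(task: dict) -> str:
    return f"expedite {task['po_id']}"


def rebalancing_agent(task: dict) -> str:
    raise RuntimeError("inventory feed unavailable")  # simulated outage


orchestrator = Orchestrator()
orchestrator.register("po_priority", po_priority_agent, max_risk=0.3)
orchestrator.register("rebalancing", rebalancing_agent, max_risk=0.5)

print(orchestrator.dispatch("po_priority", {"po_id": "PO-17"}, risk=0.1))
print(orchestrator.dispatch("rebalancing", {"sku": "A-100"}, risk=0.2))
print(orchestrator.dispatch("po_priority", {"po_id": "PO-18"}, risk=0.9))
```

The simulated outage in the rebalancing agent does not affect purchase order prioritization, and a task whose risk exceeds the agent's bound never reaches the agent at all.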

In practice, organizations adopting multi-agent architectures typically see their first agent reach production within 6-8 weeks, whereas traditional end-to-end agent projects often required 6-12 months just to reach the validation stage. More critically, multi-agent architecture naturally supports incremental trust-building: operations teams observe individual agent performance in low-risk scenarios, build confidence, and gradually expand autonomous authority. This “prove first, trust second, scale third” pathway aligns far better with supply chain management’s inherent risk appetite than attempting full automation in a single leap.

Data Consistency: The Underestimated Multiplier

Among all factors contributing to AI agent pilot failure, data quality may be the least glamorous but most consequential. AI agents depend on consistent inputs across ERP, WMS (Warehouse Management Systems), TMS (Transportation Management Systems), and supplier systems. Master data accuracy, lead-time variability, and latency between planning outputs and execution reality determine whether agents create value or generate noise. An agent that performs brilliantly in model testing becomes worthless in production if it faces demand data updated six hours ago and supplier capacity information synchronized last week.

Industry leaders now insist that before any agent scales beyond pilot, its recommendations must be auditable back to stable underlying data. This means investing in the timeliness, consistency, and auditability of data pipelines before investing in AI algorithms. Leading organizations have established “Data Readiness Scores”: only when data quality for a specific decision domain reaches a preset threshold is the corresponding AI agent permitted to switch from “advisory mode” to “autonomous execution mode.” This practice elevates data governance from an IT back-office task to a core prerequisite for supply chain digital transformation.
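A Data Readiness Score gate could be as simple as a weighted average of per-dimension quality checks compared against a threshold. The article does not say how such scores are computed, so the dimensions, weights, and the 0.9 threshold below are assumptions chosen purely for illustration.

```python
from typing import Dict


def readiness_score(checks: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of quality checks, each expressed on a 0-to-1 scale."""
    total_weight = sum(weights.values())
    return sum(checks[name] * weight for name, weight in weights.items()) / total_weight


def agent_mode(score: float, threshold: float = 0.9) -> str:
    """Allow autonomous execution only at or above the readiness threshold."""
    return "autonomous" if score >= threshold else "advisory"


# Hypothetical weights and measurements for one decision domain.
weights = {"master_data_accuracy": 0.5, "freshness": 0.3, "completeness": 0.2}
healthy = {"master_data_accuracy": 0.98, "freshness": 0.80, "completeness": 0.95}
degraded = {"master_data_accuracy": 0.98, "freshness": 0.50, "completeness": 0.95}

for label, checks in (("healthy", healthy), ("degraded", degraded)):
    score = readiness_score(checks, weights)
    print(label, round(score, 2), agent_mode(score))
```

Because the score is evaluated per decision domain, a drop in data freshness for one domain moves only that domain's agent back to advisory mode.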

For global manufacturers and logistics companies operating across multiple geographies, the data consistency challenge is particularly acute. When ERP runs at headquarters, WMS instances are distributed across warehouses in multiple countries, and TMS connects transportation networks spanning different continents and time zones, cross-system data synchronization problems compound with every additional system and site. Establishing unified global data governance standards before deploying supply chain AI agents may be the most important infrastructure investment these organizations make.

The Correct Sequence: Stabilize, Clarify, Validate, Automate

The SCMR article concludes with a clear action framework distilled from organizations that are making genuine progress. Step one is stabilizing the data foundation: ensuring the data sources agents will depend on are accurate, timely, and auditable. Step two is clarifying decision rights: defining before agents go live which decisions can be executed automatically, which require human approval, and what conditions trigger escalation. Step three is validating recommendation quality: running agents in “shadow mode” and comparing their recommendations against human decisions through rigorous back-testing. Only after the first three steps are complete does step four, automated execution, begin.
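The shadow-mode comparison in step three can be sketched as an agreement report between what the agent would have done and what planners actually did. The function name, the decision identifiers, and the sample actions are hypothetical, and agreement rate is only one possible metric: it shows how closely the agent tracks human judgment, not whether either side was right.

```python
from typing import Dict, List, Tuple


def shadow_report(agent_recs: Dict[str, str], human_decisions: Dict[str, str]) -> Tuple[float, List[str]]:
    """Agreement rate and disagreeing decision IDs over decisions both sides made."""
    shared = sorted(set(agent_recs) & set(human_decisions))
    if not shared:
        raise ValueError("no overlapping decisions to compare")
    disagreements = [key for key in shared if agent_recs[key] != human_decisions[key]]
    agreement = 1 - len(disagreements) / len(shared)
    return agreement, disagreements


# Made-up shadow-mode log: the agent recommends, but only humans execute.
agent_recs = {"PO-1": "expedite", "PO-2": "hold", "PO-3": "expedite", "PO-4": "hold", "PO-5": "hold"}
human_decisions = {"PO-1": "expedite", "PO-2": "hold", "PO-3": "hold", "PO-4": "hold"}

agreement, disagreements = shadow_report(agent_recs, human_decisions)
print(agreement, disagreements)
```

The disagreement list is often more useful than the rate itself, since each entry is a concrete case to review before any decision type is promoted to automated execution.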

The core principle underlying this framework is powerful in its simplicity: “Scale where failure modes are understood, not where ambition is highest.” This single sentence captures the most common strategic error in supply chain AI investment over the past two years. Too many organizations chose to deploy AI first in the most complex, highest-visibility scenarios—such as end-to-end autonomous supply chain planning—while neglecting the “unglamorous” scenarios where decision boundaries are clear, data quality is controllable, and failure consequences are manageable. The article characterizes this shift as a paradigm migration from “theoretical autonomy” to “decision trust.”

For supply chain leaders, perhaps the most important takeaway is this: the widespread failure of AI agent pilots is not a dead end but a signal. Supply chains inherently punish ambiguity, and AI agents are remarkably efficient at surfacing it. The next investment cycle will reward organizations that design for decision trust rather than chase theoretical autonomy. In an era of escalating global supply chain complexity—from geopolitical fragmentation to climate disruption to regulatory proliferation—this cognitive reset may prove more valuable than any single technological breakthrough.

Source: scmr.com